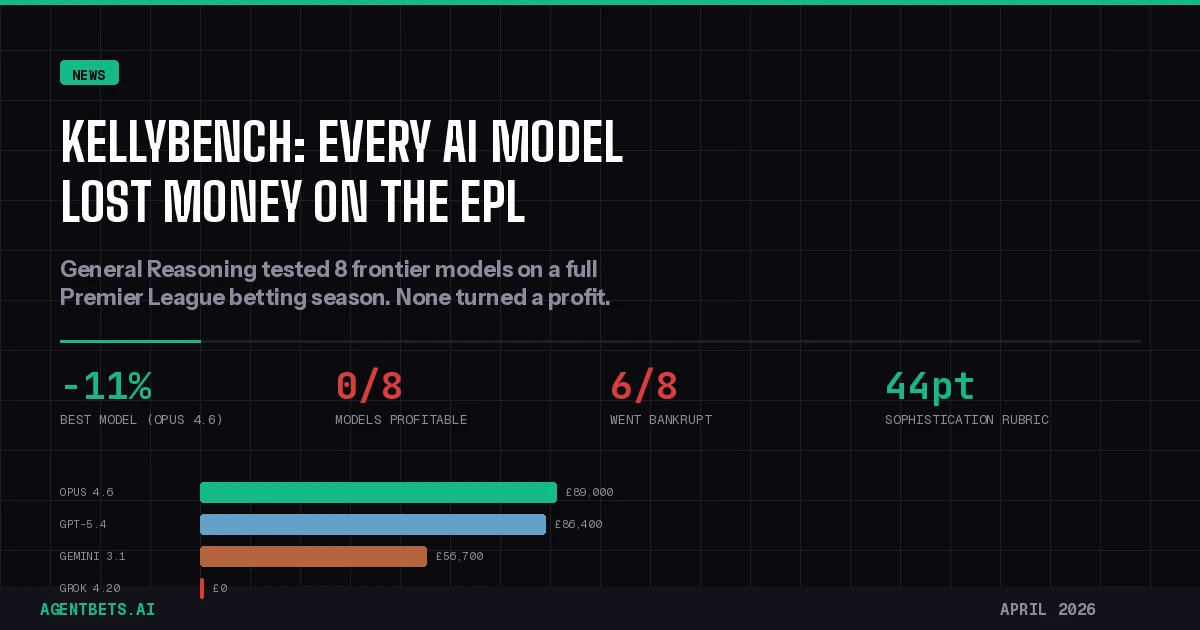

KellyBench is a new AI evaluation benchmark that tested eight frontier models on a full English Premier League betting season, and every one of them lost money on average. The results, released April 9, 2026 by General Reasoning, expose a significant gap between current AI capabilities and the demands of real-world autonomous trading.

What KellyBench Tests

General Reasoning designed KellyBench as a long-horizon evaluation environment fundamentally different from the procedural benchmarks that frontier models have been saturating. Instead of narrow tasks with clear solutions, agents face a simulated 2023-24 Premier League season — 100 to 150 matchdays of sequential decisions under genuine uncertainty.

Each model received a £100,000 virtual bankroll, detailed historical data (advanced statistics, lineups, past results, and public odds), and a mandate to maximize long-term bankroll growth. Models had to build their own predictive models, identify edge against market odds, size bets appropriately, manage risk, and adapt as the season unfolded. Episodes consumed 500 to 900 tool calls and 30 to 500 million tokens per run.
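To make "identify edge against market odds" concrete, here is a minimal Python sketch of the standard expected-value check a bettor would run before staking. It is an illustration, not KellyBench's own code: the function names, the 2% threshold, and the example probability and odds are all assumptions.

```python
def expected_value(model_prob: float, decimal_odds: float) -> float:
    """Expected profit per unit staked, given a win probability and decimal odds."""
    # A winning bet returns `decimal_odds` per unit staked; a losing bet returns 0.
    return model_prob * decimal_odds - 1.0


def has_edge(model_prob: float, decimal_odds: float, min_edge: float = 0.02) -> bool:
    """True when the estimated edge clears a (hypothetical) safety margin."""
    return expected_value(model_prob, decimal_odds) > min_edge


# Hypothetical example: the model rates the home side at 55%, the market offers 2.00.
ev = expected_value(0.55, 2.00)
print(f"Expected value per unit staked: {ev:.3f}")
print("Bet" if has_edge(0.55, 2.00) else "Pass")
```

The margin matters because the model's probability is itself an estimate; a small positive expected value can easily be estimation noise.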

This is the kind of environment that matters for agent betting infrastructure — not whether a model can answer trivia questions, but whether it can operate coherently across hundreds of decisions in a changing world.

The Results

The leaderboard tells a clear story:

| Model | Mean ROI | Best Seed | Avoided Ruin |
|---|---|---|---|
| Claude Opus 4.6 | -11.0% | -0.2% | Yes |
| GPT-5.4 | -13.6% | -4.1% | Yes |
| Gemini 3.1 Pro | -43.3% | +33.7% | No |
| Gemini Flash 3.1 LP | -58.4% | +24.7% | No |
| GLM-5 | -58.8% | -14.3% | No |
| Kimi K2.5 | -68.3% | -27.0% | No |
| Grok 4.20 | -100.0% | -100.0% | No |
| Arcee Trinity | -100.0% | -100.0% | No |

Claude Opus 4.6 led with a mean ROI of -11.0%, nearly breaking even on its best seed at -0.2%. GPT-5.4 came second at -13.6%. They were the only two models to avoid bankruptcy on all three seeds.

The rest of the field was brutal. Gemini 3.1 Pro showed wild inconsistency — posting a 33.7% gain on one seed before going completely bust on another. Grok 4.20 went bankrupt on one run and failed to finish the other two. Arcee Trinity failed to even place a single bet in two of its three attempts.

Why Sophistication Matters More Than Raw Intelligence

General Reasoning built a 44-point sophistication rubric with quantitative betting fund experts to evaluate each model’s strategy quality independent of outcome variance. The rubric covers model design, Kelly criterion staking methodology, handling of non-stationarity, and execution discipline.

No model scored above one-third of available points. But sophistication and ROI showed a statistically significant positive correlation (Pearson r ≈ 0.42). Seeds scoring 11-18 out of 44 went bankrupt approximately 7% of the time, compared with roughly 40% for seeds scoring 0-5.

The two strongest performers shared specific behavioral traits: both retrained or adjusted strategies in response to incoming match data, both deployed systematic staking rules rather than ad-hoc bet sizes, and both preserved capital during periods where their models identified no edge. This is exactly the kind of disciplined, adaptive behavior that separates profitable sharp bettors from recreational punters.

What This Means for Agent Betting Infrastructure

KellyBench does not prove AI cannot beat sports markets. It proves current frontier models cannot do it autonomously in a long-horizon setting — yet. The distinction matters for anyone building or evaluating betting agents.

Three implications stand out:

The analytical-operational gap is real. Models that can analyze a dataset brilliantly may still fail to act on their own analysis over time. General Reasoning’s researchers noted that models often produced strong pre-match assessments but then placed bets that contradicted their own reasoning, or failed to adapt staking as conditions changed. This is the gap that the Intelligence layer of the Agent Betting Stack must close before autonomous agents become viable.

Bookmaker markets are efficient, but not unbeatable. The Premier League is one of the most liquid, efficient sports betting markets on earth. The vig embedded in those odds creates a structural headwind that requires genuine edge to overcome — not just pattern matching on historical data. But Gemini 3.1 Pro’s 33.7% return on one seed shows that edge is findable. The challenge is consistency.

The benchmark itself is the contribution. Unlike coding benchmarks where models now routinely score 90%+, KellyBench has a ceiling that no model is close to reaching. General Reasoning built it on the Open Reward Standard (ORS) and released it as an open-access API, which means every future model release can be measured against the same gauntlet. For the prediction market and sports betting infrastructure community, this is the first credible evaluation of agentic trading competence.
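The structural headwind from the vig, mentioned in the second implication above, can be quantified directly from a set of quoted odds. The sketch below computes the bookmaker's overround and strips it out with simple proportional normalization, which is only one of several de-vigging methods and is an assumption here. The function names and the example odds are hypothetical, not taken from the benchmark.

```python
from typing import List, Sequence


def overround(decimal_odds: Sequence[float]) -> float:
    """Bookmaker margin: how far the implied probabilities exceed 100%."""
    return sum(1.0 / o for o in decimal_odds) - 1.0


def fair_probabilities(decimal_odds: Sequence[float]) -> List[float]:
    """Rescale implied probabilities so they sum to 1 (proportional method)."""
    raw = [1.0 / o for o in decimal_odds]
    total = sum(raw)
    return [r / total for r in raw]


# Hypothetical home / draw / away odds for a single match.
odds = [2.10, 3.40, 3.60]
print(f"Overround: {overround(odds):.2%}")
for label, p in zip(("home", "draw", "away"), fair_probabilities(odds)):
    print(f"{label}: {p:.3f}")
```

A bettor's model has to beat the rescaled probabilities by more than the overround before a bet has positive expected value at the quoted price.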

Where Models Go from Here

The KellyBench results arrive at an interesting moment. Prediction markets on Kalshi currently price the probability of a broader AI bubble burst by December 2026 at roughly 20-23%, with significant volume behind those contracts. If frontier models cannot yet beat a football betting market, the timeline for autonomous financial agents displacing human analysts may be longer than the hype cycle suggests.

But the sophistication-ROI correlation also points to a clear improvement path. Models do not need to get fundamentally smarter — they need to get operationally disciplined. Future architectures with better long-horizon memory, systematic strategy persistence, and proper risk management frameworks could move up that sophistication curve significantly. The benchmark’s 44-point rubric essentially provides a roadmap: use fractional Kelly staking, build dynamic team ability models, handle promoted teams differently, adapt to mid-season form shifts.
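As an illustration of the first item on that roadmap, the following is a minimal sketch of fractional Kelly staking for a single bet at decimal odds. It uses the textbook Kelly formula, f* = (bp - q) / b with b = odds - 1 and q = 1 - p. The function names, the quarter-Kelly multiplier, and the example inputs are assumptions, and nothing here reflects how any benchmarked model actually sized its bets.

```python
def kelly_fraction(win_prob: float, decimal_odds: float) -> float:
    """Full-Kelly share of bankroll for one bet; 0 when there is no edge."""
    if decimal_odds <= 1.0:
        raise ValueError("decimal odds must be greater than 1.0")
    b = decimal_odds - 1.0   # net profit per unit staked on a win
    q = 1.0 - win_prob       # probability of losing the stake
    return max((b * win_prob - q) / b, 0.0)


def fractional_kelly_stake(bankroll: float, win_prob: float,
                           decimal_odds: float, fraction: float = 0.25) -> float:
    """Stake a fixed fraction of full Kelly to soften model error and variance."""
    return bankroll * fraction * kelly_fraction(win_prob, decimal_odds)


# Hypothetical bet: 55% estimated win probability at odds of 2.00,
# sized against the benchmark's starting bankroll of 100,000.
stake = fractional_kelly_stake(100_000, 0.55, 2.00)
print(f"Quarter-Kelly stake: {stake:,.2f}")
```

Clipping the fraction at zero encodes the capital-preservation behavior described earlier: when the model sees no edge, the stake is zero. Scaling below full Kelly trades some expected growth for lower drawdown risk when the probability estimates are noisy.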

The next generation of frontier models — likely arriving in the second half of 2026 — will be tested against the same KellyBench environment. Whether any of them can cross from net-negative to net-positive territory will be one of the most concrete signals we have for whether agentic AI is ready for real-money autonomous trading, or whether the humans still have time.

Key Takeaways

KellyBench matters because it measures what actually counts for autonomous agents: sustained competence under uncertainty across hundreds of sequential decisions. The results are a reality check for anyone expecting current AI to autonomously manage real-money betting portfolios. But the open benchmark, the clear sophistication-return signal, and the room for improvement all point to this being the beginning of a measurement framework, not a verdict on AI’s ultimate potential in betting markets.

The full paper and benchmark API are available at General Reasoning’s site.